In an era where artificial intelligence (AI) is reshaping our world, the conversation around data privacy and personal security has never been more critical. AI’s potential to revolutionize industries, from healthcare and finance to transportation and entertainment, is undeniable. However, this innovation often comes with significant privacy concerns. As we move from 2020 into early 2025, understanding how to balance AI-driven advancement with safeguarding our personal data is not just important; it is essential.

The AI Revolution: An Exciting Frontier

AI’s capability to process vast amounts of data has led to breathtaking breakthroughs. Imagine personalized medicine tailored precisely to your genetic makeup or self-driving cars safely navigating our streets. AI’s applications are nearly endless, enhancing efficiency, productivity, and the overall quality of life.

Yet, with great power comes great responsibility. The question remains: how can we harness AI’s potential without compromising data privacy and security?

The Growing Concern: Data Privacy at Risk

Privacy has always been a hot-button issue, but AI’s rapid advancement has intensified the debate. According to a 2023 study by Pew Research Center, approximately 64% of Americans expressed concerns over how their data is being used by companies implementing AI. In the UK, the Information Commissioner’s Office (ICO) reported that 58% of citizens believe AI poses significant risks to privacy.

Similarly, in Canada, a survey by the Office of the Privacy Commissioner of Canada revealed that 73% of Canadians worry about AI making decisions based on their personal data without clear consent. Australia isn’t far behind, with the Office of the Australian Information Commissioner (OAIC) noting that 61% of Australians are concerned about AI-driven surveillance.

These statistics are more than numbers: they reflect growing public unease. The issue is not only privacy but trust, and trust, once lost, is very hard to regain.

Navigating AI and Data Privacy Regulations

Countries are responding to these concerns by enacting stringent privacy laws. The European Union’s General Data Protection Regulation (GDPR), in force since 2018, set a global benchmark for data protection. The UK retained equivalent protections after Brexit through the UK GDPR and the Data Protection Act 2018. In 2022, Canada introduced Bill C-27, which proposed replacing the privacy provisions of the Personal Information Protection and Electronic Documents Act (PIPEDA) with a new Consumer Privacy Protection Act and creating an Artificial Intelligence and Data Act (AIDA). Australia amended its Privacy Act in late 2024, adding transparency requirements for automated decisions that use personal information.

In the United States, there is no comprehensive federal privacy law yet, but individual states have stepped up. The California Consumer Privacy Act (CCPA) and Virginia’s Consumer Data Protection Act (VCDPA) are leading examples of an evolving landscape in which data protection and AI ethics are becoming legislative priorities.

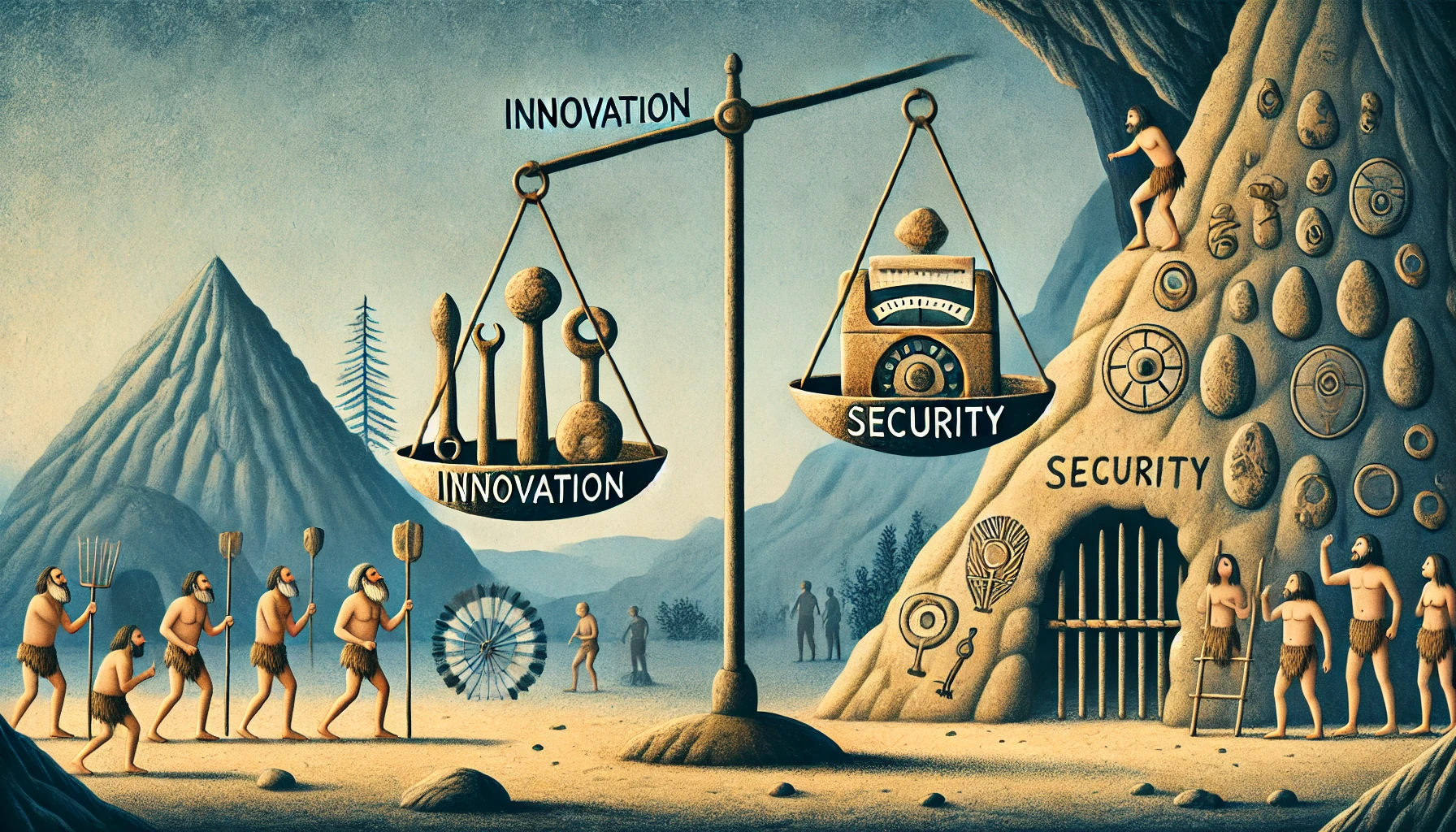

Striking the Balance: Innovation Meets Security

Balancing AI innovation with personal security requires ethical AI practices, transparent data policies, and robust cybersecurity measures. Companies innovating with AI must embed privacy by design (which the GDPR codifies as “data protection by design and by default”), so that data protection is built into their technology from the start rather than added afterward.

Tech giants like Google, Apple, and Microsoft are investing heavily in responsible AI programs, privacy-preserving technologies, and user consent frameworks. For instance, Apple’s App Store privacy labels, introduced in December 2020, give users a clear summary of how apps use their data, a move widely praised by privacy advocates.

Humanizing AI: A People-Centric Approach

At the heart of the AI-privacy debate lies the need for a humanistic approach. AI should serve humanity, enhancing lives without intruding on individual autonomy. AI developers must prioritize ethical considerations, ensuring that AI decisions are transparent, fair, and accountable.

Imagine a future where AI respects your digital boundaries just as your best friend respects your personal space. A future where your smart assistant anticipates your needs but doesn’t snoop into your private conversations. Achieving this involves continuous dialogue among policymakers, technologists, and, crucially, the public.

Education and Awareness: The Key to Empowerment

Educating the public on AI and data privacy is essential. A 2024 survey by Deloitte found that 71% of Canadians felt more confident about AI when adequately informed about privacy safeguards. Public awareness campaigns, transparent company practices, and clear governmental guidelines are critical to demystifying AI’s complexities and empowering users.

When individuals understand their rights and how their data is used, they become active participants in protecting their privacy. This empowerment is a potent tool in balancing innovation and security.

The Road Ahead: Responsible Innovation

Looking forward to the latter part of 2025 and beyond, the AI landscape will likely be even more integrated into daily life. From smart cities in Australia to AI-driven healthcare systems in the US and automated financial advisors in the UK and Canada, AI’s role will only expand.

However, with responsible innovation backed by robust regulatory frameworks and ethical AI practices, this future can be both exciting and secure. The challenge is substantial, but the reward of achieving this balance is invaluable.